
Web server

Web Design & Development Guide


The inside/front of a Dell PowerEdge web server

The term Web server can mean one of two things:

- A computer program that is responsible for accepting HTTP requests from clients, typically Web browsers, and serving them HTTP responses along with optional content, which usually consists of Web pages such as HTML documents and linked objects (images, etc.).
- A computer that runs a computer program which provides the functionality described in the first sense of the term.

Common features

A Web server hosting the My Opera Community site on the Internet

Although Web server programs differ in detail, they all share some basic common features:

- HTTP: every Web server program operates by accepting HTTP requests from the network and providing an HTTP response to the requester. The HTTP response typically consists of an HTML document, but can also be a raw text file, an image, or some other type of document (identified by its MIME type). If the client's request is malformed, or an error occurs while serving it, the Web server has to send an error response, which may include custom HTML or text messages that explain the problem to end users.

- Logging: Web servers usually also have the capability of logging detailed information about client requests and server responses to log files; this allows the Webmaster to collect statistics by running log analyzers on those files.
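As an illustration of what a log analyzer does, the following Python sketch counts responses per HTTP status code and totals the bytes sent. It assumes the server writes one line per request in the Common Log Format (`host ident authuser [date] "request" status bytes`), which Apache and many other servers can produce; the functions and the sample log lines are hypothetical.

```python
import re
from collections import Counter

# Assumed log format: Common Log Format, one request per line.
# host ident authuser [date] "request" status bytes
CLF_PATTERN = re.compile(
    r'^(?P<host>\S+) (?P<ident>\S+) (?P<user>\S+) \[(?P<date>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\d+|-)$'
)


def count_status_codes(lines):
    """Return a Counter mapping HTTP status codes to request counts.

    Lines that do not match the assumed format are skipped.
    """
    counts = Counter()
    for line in lines:
        match = CLF_PATTERN.match(line.strip())
        if match:
            counts[int(match.group("status"))] += 1
    return counts


def total_bytes_sent(lines):
    """Sum the response sizes; '-' (no body) counts as zero bytes."""
    total = 0
    for line in lines:
        match = CLF_PATTERN.match(line.strip())
        if match and match.group("size") != "-":
            total += int(match.group("size"))
    return total


sample_log = [
    '192.0.2.1 - - [10/Oct/2007:13:55:36 -0700] "GET /index.html HTTP/1.1" 200 2326',
    '192.0.2.7 - - [10/Oct/2007:13:55:40 -0700] "GET /missing.png HTTP/1.1" 404 209',
    '198.51.100.3 - bob [10/Oct/2007:13:56:02 -0700] "GET /index.html HTTP/1.1" 200 2326',
    'this line is not in Common Log Format',
]

print(count_status_codes(sample_log))  # Counter({200: 2, 404: 1})
print(total_bytes_sent(sample_log))    # 4861
```

Real analyzers also aggregate by URL, client and time of day, but they rest on the same line-by-line parsing.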

In practice, many Web servers also implement the following features:

- Authentication: an optional authorization request (asking for a user name and password) before allowing access to some or all resources.
- Handling of dynamic content as well as static content (file content stored in the server's filesystem(s)), by supporting one or more related interfaces (SSI, CGI, SCGI, FastCGI, JSP, PHP, ASP, ASP.NET, server APIs such as NSAPI and ISAPI, etc.).
- HTTPS support (via SSL or TLS) to allow secure (encrypted) connections to the server on the standard port 443 instead of the usual port 80.
- Content compression (e.g. by gzip encoding) to reduce the size of responses (to lower bandwidth usage, etc.).
- Virtual hosting, to serve many Web sites using one IP address.
- Large file support, to be able to serve files larger than 2 GB on 32-bit operating systems.
- Bandwidth throttling, to limit the speed of responses so as not to saturate the network and to be able to serve more clients.
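Content compression is normally negotiated per request: the client lists the encodings it accepts in the `Accept-Encoding` request header, and the server compresses the body only if gzip is acceptable, marking the response with `Content-Encoding: gzip`. The Python sketch below shows that decision. The functions `accepts_gzip` and `encode_body` and the 256-byte threshold are assumptions for illustration, and the header parsing is simplified (it only checks whether gzip is listed with a non-zero `q` value).

```python
import gzip


def accepts_gzip(accept_encoding):
    """Return True if an Accept-Encoding header value lists gzip with q > 0.

    Simplified: wildcards ("*") and preference ordering are ignored.
    """
    for token in accept_encoding.split(","):
        coding, _, params = token.partition(";")
        if coding.strip().lower() != "gzip":
            continue
        params = params.replace(" ", "").lower()
        if params.startswith("q="):
            try:
                return float(params[2:]) > 0  # q=0 means "not acceptable"
            except ValueError:
                return False
        return True
    return False


def encode_body(body, accept_encoding, min_size=256):
    """Return (headers, payload), gzip-compressing body when the client allows it."""
    headers = {"Vary": "Accept-Encoding"}  # the response depends on this request header
    if len(body) >= min_size and accepts_gzip(accept_encoding):
        payload = gzip.compress(body)
        headers["Content-Encoding"] = "gzip"
    else:
        payload = body
    headers["Content-Length"] = str(len(payload))
    return headers, payload


page = b"<p>Hello from the Web server</p>\n" * 200
headers, payload = encode_body(page, "gzip, deflate")
print(headers["Content-Encoding"], len(page), "->", len(payload), "bytes")
```

Skipping very small bodies is a common heuristic, since gzip adds a header and trailer of its own and can make tiny responses larger.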

Origin of returned content

The content sent by a server is called:

- static if it comes from an existing file lying on a filesystem;
- dynamic if it is generated on the fly by some other program, script or API called by the Web server.

Serving static content is usually much faster (from 2 to 100 times) than serving dynamic content, especially if the latter involves data pulled from a database.

Path translation

Web servers are able to map the path component of a Uniform Resource Locator (URL) into:

- a local file system resource (for static requests);
- an internal or external program name (for dynamic requests).
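The following Python sketch shows one simple way to make this decision: paths under an assumed `/cgi-bin/` prefix are treated as dynamic requests and mapped to a program name, and every other path is treated as a static request. The prefix and the `route` function are illustrative assumptions; real servers make this choice from their configuration (for example, by URL prefix or file extension).

```python
from urllib.parse import urlsplit

# Assumed convention: paths under this prefix name a CGI program.
CGI_PREFIX = "/cgi-bin/"


def route(url):
    """Classify a request URL as static or dynamic.

    Returns ("dynamic", program_name) for paths under CGI_PREFIX and
    ("static", url_path) otherwise; turning url_path into a file name
    under the server's root directory is a separate step.
    """
    path = urlsplit(url).path or "/"
    if path.startswith(CGI_PREFIX):
        # The first segment after the prefix names the program; any further
        # segments would be passed to it (CGI calls them PATH_INFO).
        program = path[len(CGI_PREFIX):].split("/", 1)[0]
        return ("dynamic", program)
    return ("static", path)


print(route("http://www.example.com/path/file.html"))
# ('static', '/path/file.html')
print(route("http://www.example.com/cgi-bin/search.cgi/extra?q=web"))
# ('dynamic', 'search.cgi')
```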

For a static request, the URL path specified by the client is relative to the Web server's root directory.

Consider the following URL as it would be requested by a client:

http://www.example.com/path/file.html

The client's Web browser will translate it into a connection to www.example.com with the following HTTP/1.1 request:

GET /path/file.html HTTP/1.1

Host: www.example.com
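On the wire, the request line and each header line end with CRLF (`\r\n`), and an empty line ends the header section. The Python sketch below parses such a request into its method, target path, protocol version and headers. `parse_request` is a hypothetical helper: it ignores request bodies and most of the validation a real server performs, but it does reject an HTTP/1.1 request without a `Host` header, since HTTP/1.1 requires that header (it is also what lets one IP address serve several virtual hosts).

```python
def parse_request(raw):
    """Split a raw HTTP request into (method, target, version, headers).

    Header names are lower-cased because HTTP header names are
    case-insensitive. Anything after the blank line (the body) is ignored.
    """
    head, _, _body = raw.partition(b"\r\n\r\n")
    lines = head.decode("iso-8859-1").split("\r\n")
    # The request line has exactly three parts; unpacking raises ValueError otherwise.
    method, target, version = lines[0].split(" ")
    headers = {}
    for line in lines[1:]:
        name, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"malformed header line: {line!r}")
        headers[name.strip().lower()] = value.strip()
    if version == "HTTP/1.1" and "host" not in headers:
        raise ValueError("HTTP/1.1 request without Host header")
    return method, target, version, headers


raw_request = b"GET /path/file.html HTTP/1.1\r\nHost: www.example.com\r\n\r\n"
method, target, version, headers = parse_request(raw_request)
print(method, target, version, headers["host"])
# GET /path/file.html HTTP/1.1 www.example.com
```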

The Web server on www.example.com will append the given path to the path of its root directory. On Unix machines, this is commonly /var/www/htdocs. The result is the local file system resource:

/var/www/htdocs/path/file.html

The Web server will then read the file, if it exists, and send a response to the client's Web browser. The response will describe the content of the file and contain the file itself.
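This translation step can be sketched in Python as follows. Besides appending the URL path to the root directory, the sketch percent-decodes and normalizes the path and rejects any result that escapes the root through `..` segments; that check is an addition not described in the text, though servers need some equivalent guard. `translate_path` and its default root (taken from the Unix example above) are assumptions.

```python
import posixpath
from urllib.parse import unquote


def translate_path(url_path, root="/var/www/htdocs"):
    """Map a URL path to a file system path under root.

    Returns None when the decoded, normalized path would fall outside root.
    """
    root = posixpath.normpath(root)
    # Drop any query string or fragment, then decode %xx escapes.
    path = url_path.split("?", 1)[0].split("#", 1)[0]
    path = unquote(path)
    # Append the URL path to the root; normpath collapses ".", ".." and "//".
    candidate = posixpath.normpath(posixpath.join(root, path.lstrip("/")))
    prefix = root.rstrip("/") + "/"
    if candidate != root and not candidate.startswith(prefix):
        return None  # e.g. "/../../etc/passwd" would escape the root directory
    return candidate


print(translate_path("/path/file.html"))    # /var/www/htdocs/path/file.html
print(translate_path("/../../etc/passwd"))  # None
```

A production server would also resolve symbolic links and decide what to send for directory paths such as `/` (for example, an index file); both are left out here.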

Performance

Web servers (programs) are expected to serve requests quickly from more than one TCP/IP connection at a time.

The main performance parameters (measured under a varying load of clients and requests per client) are:

- number of requests per second (depending on the type of request, etc.);
- latency: response time in milliseconds for each new connection or request;
- throughput in bytes per second (depending on file size, cached or not cached content, available network bandwidth, etc.).

The three parameters above vary noticeably depending on the number of active connections, so a fourth parameter is the concurrency level supported by a Web server under a specific configuration.
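As a worked example of these parameters, this Python sketch derives them from per-request measurements collected during a benchmark run. The `Sample` record and the numbers are invented for illustration. Because requests overlap when several clients are active, requests per second is computed over the whole measurement window rather than as the inverse of the mean latency.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    start: float     # seconds since the test began, when the request was sent
    end: float       # seconds since the test began, when the response completed
    bytes_sent: int  # size of the response in bytes


def summarize(samples):
    """Return (requests per second, mean latency in ms, throughput in bytes/s)."""
    # The measurement window runs from the first request to the last response.
    window = max(s.end for s in samples) - min(s.start for s in samples)
    requests_per_second = len(samples) / window
    mean_latency_ms = 1000 * sum(s.end - s.start for s in samples) / len(samples)
    throughput = sum(s.bytes_sent for s in samples) / window
    return requests_per_second, mean_latency_ms, throughput


# Three overlapping requests from concurrent clients, then one later request.
samples = [
    Sample(0.00, 0.40, 10_000),
    Sample(0.10, 0.30, 50_000),
    Sample(0.20, 0.60, 20_000),
    Sample(1.50, 2.00, 120_000),
]
rps, latency_ms, throughput = summarize(samples)
print(f"{rps:.1f} req/s, {latency_ms:.1f} ms mean latency, {throughput:.0f} B/s")
```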

Finally, the server model used to implement a Web server program (for example, one process or thread per connection versus an event-driven design) can bias the performance and scalability that can be reached under heavy load or when using high-end hardware (many CPUs, disks, etc.).

Load limits

A Web server (program) has defined load limits, because it can handle only a limited number of concurrent client connections per IP address (and IP port), usually between 2 and 60,000 (by default between 500 and 1,000), and it can serve only a certain maximum number of requests per second, depending on:

- its own settings;
- the HTTP request type;
- content origin (static or dynamic);
- whether or not the served content is cached;
- the hardware and software limits of the OS on which it is running.

When a Web server is near or over its limits, it becomes overloaded and thus unresponsive.
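One way a server can enforce a hard limit on concurrent connections is to admit requests only while a slot is free and answer the rest immediately with 503 Service Unavailable, instead of letting them pile up. The Python sketch below does this with a non-blocking semaphore; the `ConnectionLimiter` class and the limit of 2 are hypothetical, chosen to keep the example small.

```python
import threading


class ConnectionLimiter:
    """Admit at most max_active requests at once; reject the rest with 503."""

    def __init__(self, max_active):
        self._slots = threading.BoundedSemaphore(max_active)

    def try_admit(self):
        # Non-blocking: returns False immediately when every slot is taken.
        return self._slots.acquire(blocking=False)

    def release(self):
        self._slots.release()

    def handle(self, serve):
        """Call serve() inside a slot, or return a 503 status line if none is free."""
        if not self.try_admit():
            return "HTTP/1.1 503 Service Unavailable"
        try:
            return serve()
        finally:
            self.release()


limiter = ConnectionLimiter(max_active=2)
held = [limiter.try_admit(), limiter.try_admit()]  # two slow requests in progress
print(limiter.handle(lambda: "HTTP/1.1 200 OK"))   # HTTP/1.1 503 Service Unavailable
limiter.release()                                   # one slow request finishes
print(limiter.handle(lambda: "HTTP/1.1 200 OK"))   # HTTP/1.1 200 OK
```

Rejecting quickly keeps the server responsive for the requests it has already accepted; queuing the extra requests instead trades error responses for long delays.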

Overload causes

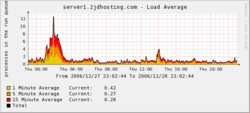

A daily graph of a web server's load, indicating a spike in the load early in the day.

At any time Web servers can be overloaded because of:

- too much legitimate Web traffic (e.g. thousands or even millions of clients hitting the Web site in a short interval of time);
- DDoS (Distributed Denial of Service) attacks;
- computer worms, which sometimes cause abnormal traffic because of millions of infected computers (not coordinated among them);
- XSS viruses, which can cause high traffic because of millions of infected browsers and/or Web servers;
- Internet Web robot traffic that is not filtered or limited on large Web sites with very few resources (bandwidth, etc.);
- Internet (network) slowdowns, so that client requests are served more slowly and the number of connections increases so much that server limits are reached;
- partial unavailability of Web servers (computers), which can happen because of required or urgent maintenance or upgrades, hardware or software failures, back-end (e.g. database) failures, etc.; in these cases the remaining Web servers get too much traffic and become overloaded.

Overload symptoms

The symptoms of an overloaded Web server are:

- requests are served with (possibly long) delays (from 1 second to a few hundred seconds);
- HTTP 500, 502, 503 or 504 errors are returned to clients (sometimes unrelated 404 or even 408 errors may be returned as well);
- TCP connections are refused or reset (interrupted) before any content is sent to clients;
- in very rare cases, only partial contents are sent (this behaviour may well be considered a bug, even if it usually depends on unavailable system resources).

Anti-overload techniques

To partially overcome the above load limits and to prevent overload, most popular Web sites use common techniques such as:

- managing network traffic, by using:
  - firewalls to block unwanted traffic coming from bad IP sources or having bad patterns;
  - HTTP traffic managers to drop, redirect or rewrite requests having bad HTTP patterns;
  - bandwidth management and traffic shaping, in order to smooth down peaks in network usage;
- deploying Web cache techniques;
- using different domain names to serve different (static and dynamic) content by separate Web servers, e.g.:
  - using different domain names and/or computers to separate big files from small and medium-sized files; the idea is to be able to fully cache small and medium-sized files and to efficiently serve big or huge (over 10 to 1000 MB) files by using different settings;
- using many Web servers (programs) per computer, each one bound to its own network card and IP address;
- using many Web servers (computers) that are grouped together so that they act or are seen as one big Web server (see also Load balancer);
- adding more hardware resources (e.g. RAM, disks) to each computer;
- tuning OS parameters for hardware capabilities and usage;
- using more efficient computer programs for Web servers, etc.;
- using other workarounds, especially if dynamic content is involved.
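Grouping several Web server computers behind a load balancer can be illustrated with round-robin selection: each new request goes to the next backend in turn, skipping any backend marked as unavailable (the partial-unavailability case listed among overload causes). This is a Python sketch with hypothetical backend names; production load balancers typically also weight backends, track active connections and probe health automatically.

```python
import itertools


class RoundRobinBalancer:
    """Pick backends in turn, skipping those marked as down."""

    def __init__(self, backends):
        self._backends = list(backends)
        self._down = set()
        self._cycle = itertools.cycle(self._backends)

    def mark_down(self, backend):
        self._down.add(backend)

    def mark_up(self, backend):
        self._down.discard(backend)

    def pick(self):
        # Look at each backend at most once per call.
        for _ in range(len(self._backends)):
            backend = next(self._cycle)
            if backend not in self._down:
                return backend
        raise RuntimeError("no backend available")  # every server is down


lb = RoundRobinBalancer(["web1", "web2", "web3"])
print([lb.pick() for _ in range(4)])  # ['web1', 'web2', 'web3', 'web1']
lb.mark_down("web2")
print([lb.pick() for _ in range(3)])  # ['web3', 'web1', 'web3']
```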

Historical notes

The world's first web server.

In 1989 Tim Berners-Lee proposed to his employer CERN (European Organization for Nuclear Research) a new project, which had the goal of easing the exchange of information between scientists by using a hypertext system. As a result of the implementation of this project, in 1990 Berners-Lee wrote two programs:

- a browser called WorldWideWeb;
- the world's first Web server, which ran on NeXTSTEP. The machine on which it ran is now on exhibition at CERN's public museum, Microcosm.

The first Web server in the U.S.A. was installed on December 12, 1991, at SLAC.[1]

Between 1991 and 1994, the simplicity and effectiveness of the early technologies used to surf and exchange data through the World Wide Web helped a lot to:

- port them to many different OSs;
- spread their use among many different social groups, first in scientific organizations, then in universities and finally in industry.

In 1994 Tim Berners-Lee founded the World Wide Web Consortium (W3C) to guide the further development of the many technologies involved (HTTP, HTML, etc.) through a standardization process.

The following years saw exponential growth (which became explosive after 2000) in the number of Web sites and, consequently, in the number of Web servers.

Software

As of July 2007, the most common HTTP serving programs were:[2]

- Apache HTTP Server
- Microsoft
- Sun
- lighttpd

Here, Microsoft is the sum of sites running Microsoft-Internet-Information-Server, Microsoft-IIS, Microsoft-IIS-W, Microsoft-PWS-95, and Microsoft-PWS.

Sun is the sum of sites running SunONE, iPlanet-Enterprise, Netscape-Enterprise, Netscape-FastTrack, Netscape-Commerce, Netscape-Communications, Netsite-Commerce, and Netsite-Communications.

There are thousands of different Web server programs available, many of which are specialized for very specific purposes, so the fact that a Web server is not very popular does not necessarily mean that it has a lot of bugs or poor performance.

Statistics

The most popular Web servers used for public Web sites are tracked by the Netcraft Web Server Survey, with details given in the Netcraft Web Server Reports.

According to this site, Apache has been the most popular Web server on the Internet since April 1996. The January 2007 Netcraft Web Server Survey found that about 60% of the Web sites on the Internet were using Apache, followed by IIS with about a 30% share.

Another site providing statistics is SecuritySpace,[3] which also provides a detailed breakdown for each version of Web server.[4]


External links

- RFC 2616, the Request for Comments document that defines the HTTP/1.1 protocol.


Web Design & Development Guide, made by MultiMedia

This guide is licensed under the GNU Free Documentation License. It uses material from Wikipedia.
